Recently, I made a comment over on Steve Heard’s Scientist Sees Squirrel blog:

I have never published a paper that’s not been improved, to some degree, by peer review, and broadly the system works. But I do wonder if it’s sustainable in the long-term and whether in the future LLMs might actually be a more effective way of assessing manuscripts. I recognise that’s (currently) a controversial statement to make – but having recently run a few of my own manuscripts through ChatGPT and asked for its “opinion”, I can honestly say that the feedback has improved not just the writing but also the framing and focus of the work. It’s also picked up weaknesses and errors that I had otherwise missed.

That initiated an email conversation with Steve which resulted in me running a short experiment with ChatGPT model 5.5. I first loaded up the original manuscript that I’d submitted to a journal of this paper on pollinator effectiveness. I then asked ChatGPT to write a review of the manuscript as though it was a peer reviewer of the journal. Which it did – in some detail – in 28 seconds! If anyone is interested I can send them that review, but it’s the next bit that I think is especially interesting.

After ChatGPT had completed the review, I then uploaded the actual peer reviews I’d received from the journal, plus the editor’s comments, and asked it to summarise the degree to which its review agreed with those I had received.

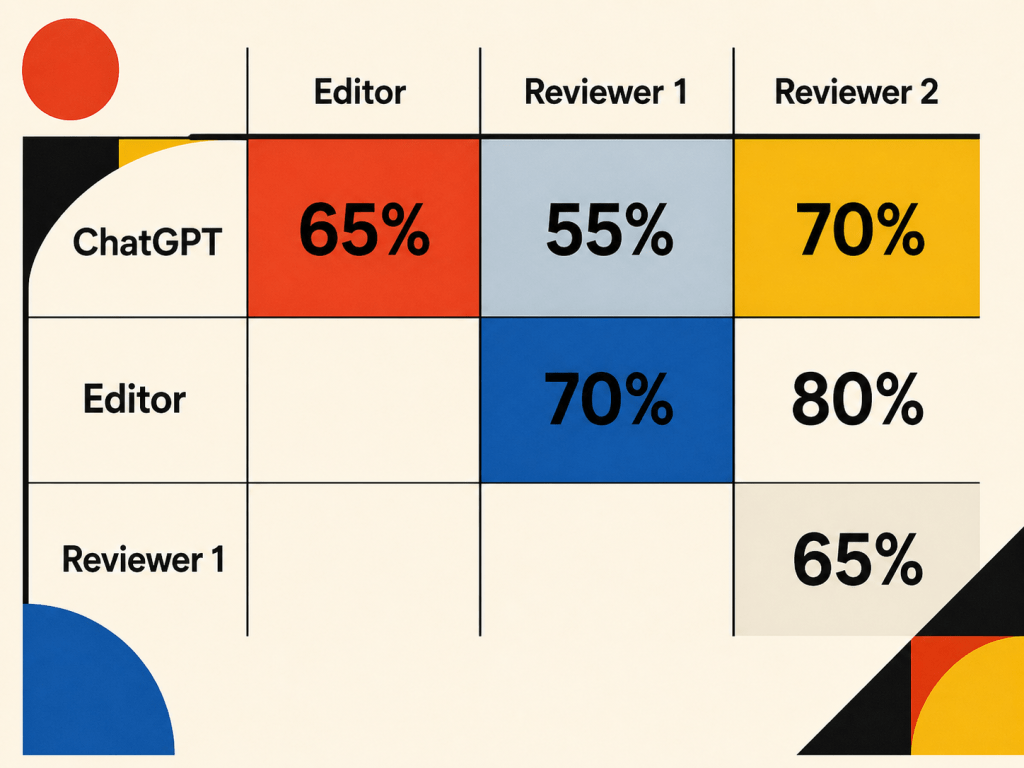

Below I have copied the response, but it’s the take-home message, summarised in the graphic above, which I think is the most intriguing aspect: there was a 55% agreement with Reviewer 1, a 70% agreement with Reviewer 2, and a 65% overall agreement between ChatGPT’s assessment of the manuscript and that of the Editor.

That may not sound like a large overlap, but note that it’s the level of agreement between the two reviewers was only 65%! In my not inconsiderable experience (>130 peer reviewed papers and chapters, hundreds of peer reviews of my own) this is fairly typical – reviewers ALWAYS pick up on different strengths and weaknesses in a manuscript!

What particularly impressed me about ChatGPT’s response below, is that it went far beyond simply looking at the language being used, it provided a critique of how the study was conducted, how data were analysed, and the conclusions drawn. Which is just what we expect a human reviewer to do! Not only that, but it was more critical of the work than the reviewers and the editor.

So where does this leave us? Could Large Language Models (LLMs) like ChatGPT replace part of the academic peer-review process? Clearly there need to be guide rails and human agency involved in final decisions, but I think that, yes, they may well have a role to play in the not-too-distant future. As always, I’d be interested in your comments.

If you want to dive into this more deeply, here’s the verbatim account of what ChatGPT wrote (including its emboldened parts) when I asked how much agreement there was between its assessment and those of the other two reviewers and the editor:

There is a fair amount of overlap, but not a perfect match.

I would say my review was moderately similar in substance, but noticeably more critical in tone and in editorial recommendation. The strongest areas of convergence were on the paper’s core strengths and on one key statistical concern. The biggest mismatch is that the actual editor and reviewers were much more positive overall, and most of their requested changes are closer to clarification, presentation, and framing than to a deep challenge to the paper’s publishability.

The clearest points of agreement are these. First, I highlighted the manuscript’s strengths as the multi-year design, the focus on different functional groups, and the interesting role of Lepidoptera; that aligns very closely with the guest editor, who explicitly says that “the roles of lepidopterans, the inclusion of flight distance measures, and the multi-year study are particularly notable,” and with Reviewer 2, who lists the multi-year field study, multiple pollinator groups, and the unexpected importance of Lepidoptera as strengths. Second, I flagged the Poisson treatment of an ordinal flight-distance variable as statistically weak; Reviewer 1 independently makes essentially the same point and explicitly recommends ordinal regression instead. Third, I thought the discussion was somewhat over-extended and not always tightly aligned with the evidence; Reviewer 2 likewise suggests embedding the work better in the broader literature and moving some material out of the conclusion into the discussion.

There is also a partial overlap around the manuscript’s central interpretation. I argued that the paper sometimes pushes too hard from pollen deposition and coarse movement classes to larger claims about true generalisation, outcrossing value, and broader evolutionary or environmental buffering. The editor does not object to those broad claims in the same way; instead, the editor actually asks for the discussion to connect the results more explicitly to the theoretical statement that plants should generalise on equally effective pollinators under dynamic environments, and even invites discussion of anthropogenic disturbance and future interactions. In other words, where I wanted that interpretive arc toned down, the editor mostly wanted it better developed and better supported.

The main differences are quite important.

First, I was more sceptical about inference than the real reviewers were. I was worried that the manuscript moved too quickly from non-significant or coarse flight-distance patterns to statements about butterfly superiority, reduced geitonogamy, and broader adaptive significance. Reviewer 1 only objected narrowly to the modelling choice; neither reviewer makes my broader inferential critique, and the editor seems broadly comfortable with the interpretive direction so long as it is supported with more descriptive data and clearer exposition.

Second, I was more concerned about the coarse pooling of visitor taxa. I thought the manuscript should be more cautious because “butterflies,” “hoverflies,” “bumblebees,” and especially “Others” are broad bins. The actual comments do touch this, but in a softer, more taxonomic way: the editor asks whether there is a species or morphotype list, and Reviewer 2 asks for more detail on the butterfly taxa and justification for putting solitary bees into “Others.” That is related to my concern, but it is narrower and more presentational than the conceptual objection I raised.

Third, I did not anticipate how much of the real feedback would focus on descriptive content and figures. The editor repeatedly asks for more floral biology data, clearer results presentation, more absolute numbers, more behavioural quantification, clearer site description, and better figure design and consistency. Reviewer 2 similarly asks for effect sizes, better figures, units, and clearer display of the underlying data distribution. I mentioned presentational issues only in a general way; the actual reports are much more detailed and concrete here.

Fourth, I did not raise the absence of random effects, whereas Reviewer 2 does. That is probably the single most important substantive point that I missed. Reviewer 2 specifically asks why insect individual and/or observation day were not modelled as random effects.

Fifth, my editorial recommendation was harsher. I recommended major revision. The actual reports read much more like minor-to-moderate revision. The editor calls it “an excellent piece of work” and says it is well executed with robust methodology; Reviewer 2 says they have “little to criticise,” mostly editorial suggestions plus a few statistical and figure issues; Reviewer 1 has only a short list of points. That collective tone is clearly more encouraging than mine.

So, in condensed form:

- Where I matched well: multi-year dataset as a major strength; Lepidoptera result as a notable contribution; the Poisson-for-ordinal issue; some concern that discussion/framing needed tightening.

- Where I was too severe: I treated the interpretive overreach as a major obstacle, whereas the real reviewers mostly saw a strong paper needing clarification and enrichment rather than retrenchment.

- What I underplayed or missed: requests for more descriptive natural history and floral biology data, much more detailed figure/presentation criticism, and the random-effects issue.

My bottom-line judgement is that my review was directionally right on some key scientific points, especially the statistical issue and the general strengths, but too pessimistic about the paper’s editorial position. If I had seen these reports first, I would have shifted from “major revision because the evidence-conclusion fit is shaky” to something more like “moderate revision: good paper, but it needs clearer descriptive support, better figure presentation, and tighter statistical justification. If revised along those lines, I think the manuscript could become a credible and interesting contribution”.

Here is a concise version of the editorial decision in journal-review language:

This manuscript addresses an interesting and worthwhile question using a useful multi-year dataset and a stronger-than-usual measure of pollinator performance. However, the current version over-interprets some of the results, particularly those concerning movement behaviour, outcrossing implications, and the broader adaptive significance of generalised pollination. The statistical treatment of flight distance is also not fully convincing. I therefore recommend major revision. The manuscript has clear potential, but its conclusions need to be more tightly aligned with what the data actually demonstrate.

Excellent post and thoughtful reflection, Jeff!

It made me consider that one potential advantage of LLM-based reviews, compared to human reviews, is their capacity for greater neutrality and consistency, which can sometimes be difficult for humans to maintain. In this sense, LLMs may help reduce biases or personal preferences toward authors, regardless of their surnames and geographic origin. From my perspective, this could be particularly valuable, as researchers in Latin America (and many other regions!) often encounter challenges in the review process that may be less familiar to colleagues working in developed regions of the Global North.

Thanks Felipe, you make a great point there. Yes, the neutrality aspect could be important as long as the LLM is not trained on biased peer reviews!

This is indeed interesting, Jeff. I have no problem believing that a ChaptGPT “review” could be useful – any kind of feedback from anywhere can be useful! And I’m fairly impressed with the depth that “review” manages to have. But here’s the thing: I’m impressed for the same reason I put “review” in scare quotes. It isn’t one. ChatGPT cannot provide “a critique of how the study was conducted, how data were analysed, and the conclusions drawn.” It doesn’t know anything about data analysis or logical inference. It’s a large language model: it does next-token prediction AND THAT IS ALL. It can produce sequences of words that look a lot like the sequences of words reviewers might produce, given a draft.

Now, what’s impressive is that, by doing only next-token prediction, it’s managed to make some useful suggestions! That’s a bit counterintuitive, but it accords with the fact that ChatGPT is often able to do a decent job helping a manuscript get better structure and logical flow. It’s clearly too simplistic to think of next-token prediction only in terms of a short sequence of words. The problem is that of course this tempts too many people to think that ChatGPT is doing something more than next-token prediction – that it is actually providing a critique, or has opinions, or similar. And of course it isn’t and doesn’t, and you know that as well as I do! But it’s tempting, isn’t it?

I’m amused that ChatGPT says “I did not raise the absence of random effects, whereas Reviewer 2 does. That is probably the single most important substantive point that I missed”. ChatGPT doesn’t know what random effects are, or whether a point about them is substantive or not!

So my take is pretty simple: yes, an LLM can give you surprisingly useful advice about a manuscript! But there’s no reason to take its critiques seriously; when they match actual reviewers that’s basically a statistical coincidence. A useful one, to be sure!

Thanks for the comment Steve. So, as we’ve previously discussed, it seems to me that the latest versions of ChatGPT are doing a bit more than statistical predicting. That’s borne out by Google’s AI (!) assessment of it capabilities, that “GPT-5.5 acts as an intelligent agent that manages the entire workflow of a task, rather than just predicting the next word in a sequence….Based on the latest reports from April 2026, GPT-5.5 (and its predecessor, GPT-5.4) does significantly more than simple next-token prediction by acting as an agentic system that can autonomously plan and execute complex tasks. While the underlying mechanism still operates as a probabilistic model, GPT-5.5 operates within an integrated ecosystem that allows it to use tools, browse the web, and analyze data over long horizons.”

Now, we can debate what all of that means, but it’s clearly more than just predicting the next word. But even if that were the case, your comment about statistical coincidence is only true insofar as we know what a reviewer has said. If we didn’t have that reviewer’s report to compare with what ChatGPT came up with then we’d not be able to say anything about statistical coincidence, right?

I take your point about its use of “I” and, yes, it is tempting to think of it as an individual. But that’s how we, as humans, interact with a lot of the non-human world around us. I, for one, have trees that I regularly hug and refer to as old friends!

The other point I’d make is that we’re still very much at the start of this process of learning about the capabilities of such systems. We were hardly talking about this stuff two years ago and we’ve come a long way in a very short space of time!

PS – This is starting to get a bit meta, but I asked ChatGPT to take a look at the post and it picked up on your comment immediately. This is what it came up with:

“The main vulnerability is that the post sometimes glides from “useful output” to “therefore something review-like is happening under the hood.” Steve Heard’s comment gets right at that distinction, and at present his objection is probably the sharpest challenge to the piece. The post itself is careful, but some readers will still feel that it anthropomorphises the model a little too readily, especially in phrases like “its opinion” or “what ChatGPT wrote” as if that implies judgement in the human sense. You partly address that in the comments, but a sentence in the main post explicitly distinguishing “useful evaluative output” from “human understanding” would make the argument harder to caricature.”

You and ChatGPT are clearly on the same wavelength!